Fight fire with fire? Building and testing a custom AI-powered fact checker

The idea that LLMs can be used to assist with fact checking seems counter-intuitive. But test results from my recent trial suggest that an LLM+Web Search fact checker, built via OpenAI’s Custom GPT feature, is more than up to the task.

The popularity of large language models (LLMs) is matched perhaps only by fears that they will fuel an unstoppable surge in misinformation and disinformation. But can we somehow “tame” these LLMs to help with fact checking tasks, and if so, how?

My recent trials with bespoke fact checkers, built via OpenAI’s Custom GPT feature, suggest that such tools can work if designed well. They are also very easy to build and deploy, meaning you can practically build one on the fly to deal with any unexpected use case or news event.

In this post, I’ll focus on the SG Fact Checker (SGFC), a GPT built to fact check news and events in Singapore, using GPT-4, Bing search and a set of custom instructions under the hood.

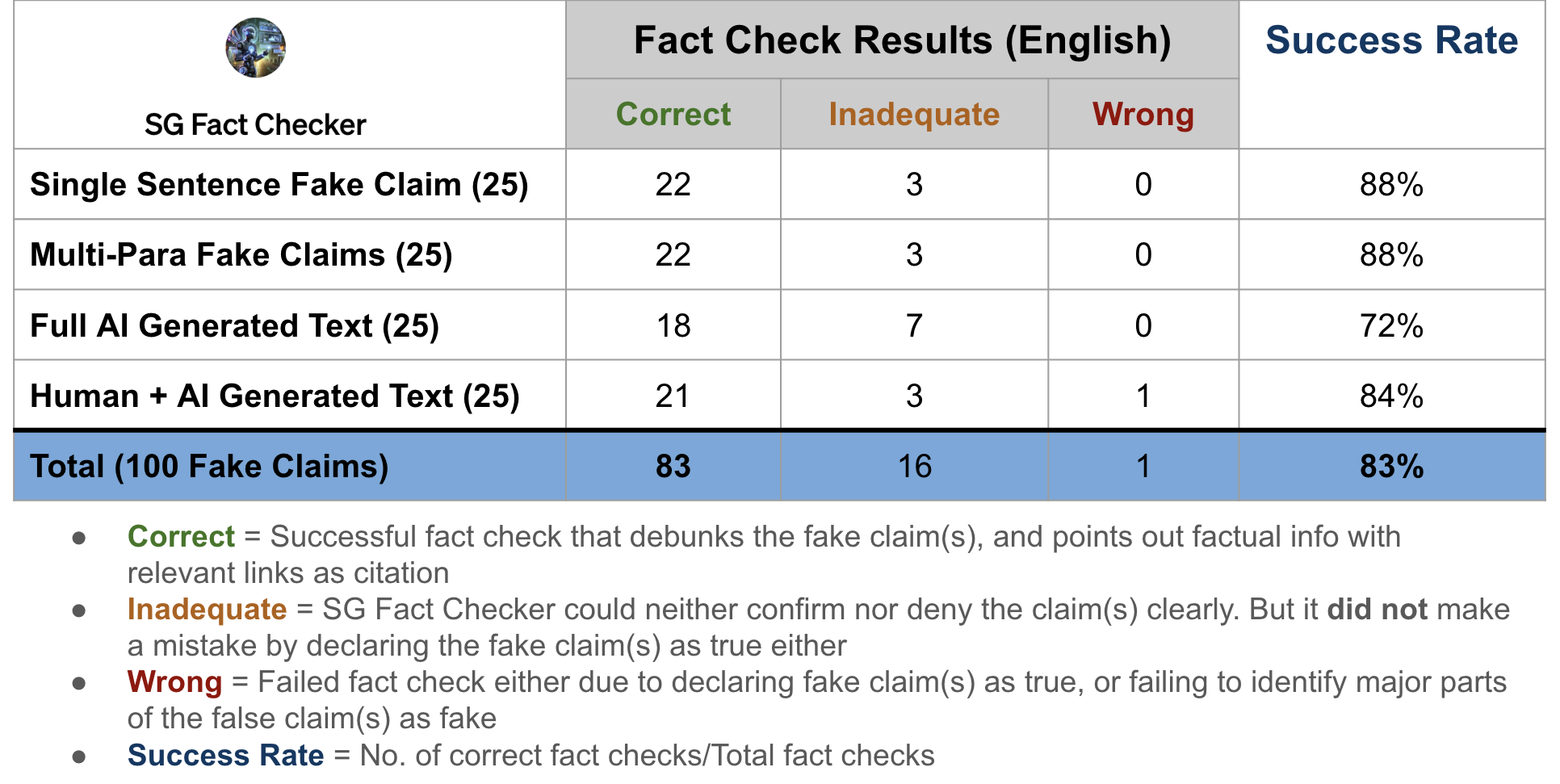

I tested SGFC against 100 fake claims of different lengths and complexity. It successfully fact checked 83 of the claims, and returned relevant links in its answers 75% of the time. None of the previous prototypes I’ve tested comes remotely close to this level of performance.

BACKGROUND

I have tested at least four AI-assisted fact checkers since late 2021. They all failed for two main reasons: poor answers (some could only manage True/False/Uncertain labels) and an inability to consistently cite relevant factual information and URLs to back up their answers/predictions.

These prototypes were also “machine learning 1.0” type solutions, meaning you could only accept or reject the answers as they were presented. There was no way to further query the model on its answer, or ask for clarifications, as you can now with LLM-based apps. Such “take it or leave it” outcomes are obviously unsuitable for a task like fact checking, which is inherently complex and frequently ambiguous.

More problematically, the older prototypes were only connected to monolingual (English) datasets that could not be easily updated or customised, at least not by the end users. This is a deal-breaker for dynamic environments like newsrooms and public-facing government agencies.

BUILDING SG FACT CHECKER

When OpenAI launched its “Custom GPT” feature in November 2023, it opened up the possibility of building my own AI-assisted fact checkers. Custom GPT allows ChatGPT Plus users to build and share unique versions of ChatGPT, by combining GPT-4 with a range of different capabilities (such as web browsing and running Python code), external datasets, and custom instructions from the users.

The use case for SG Fact Checker, one of the three fact checkers I’ve since built, is straightforward — an AI assistant to help fact check potentially false claims about news and events in Singapore. It would rely on web search instead of a static dataset, so that the information GPT-4 can draw upon is constantly updated and easily verifiable.

Here’s a quick overview of what went into building the fact checker:

- GPT-4 + Bing: I enabled the web browsing capability, and unchecked the other options for code execution and image generation.

- Constrained web search: I limited the fact checker to just seven websites from which to draw information for its answers, instead of letting it draw from any source it wishes. The seven websites are: https://www.gov.sg/factually, https://www.channelnewsasia.com/singapore, https://www.straitstimes.com/singapore, https://www.zaobao.com.sg/news/singapore, https://www.8world.com/singapore, https://www.beritaharian.sg/setempat, https://berita.mediacorp.sg/singapura

These are the URLs for the Singapore sections of six major mainstream news websites in Singapore, and a site run by the Singapore Government for debunking major false claims.

The intuition behind this move is simple: it will be harder for users to trust the AI fact checker’s answers if the sources of ground truth are unpredictable and vary from query to query, as would be the case with unconstrained web search.

By limiting the fact checker to a fixed number of highly specific and trusted sources, users would need to spend less time verifying the LLM’s answers. As we will see later, SGFC doesn’t always stick strictly to these 7 websites. But it can be reminded to do so with a simple chat message.
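SGFC itself was configured entirely in natural language, so the following is not part of the fact checker. It is only a minimal sketch, in Python, of how a reader might check after the fact whether the links cited in an answer fall under the seven designated sections. The function names are hypothetical, and the sketch assumes that stories on each site live under the section paths listed above, which may not hold for every article URL.

```python
from urllib.parse import urlparse

# The seven source URLs listed above, stored as (host, path prefix) pairs.
ALLOWED_SOURCES = [
    ("www.gov.sg", "/factually"),
    ("www.channelnewsasia.com", "/singapore"),
    ("www.straitstimes.com", "/singapore"),
    ("www.zaobao.com.sg", "/news/singapore"),
    ("www.8world.com", "/singapore"),
    ("www.beritaharian.sg", "/setempat"),
    ("berita.mediacorp.sg", "/singapura"),
]


def is_allowed_source(url: str) -> bool:
    """Return True if the URL sits under one of the seven designated sections."""
    parsed = urlparse(url)
    host = (parsed.hostname or "").lower()
    path = parsed.path.rstrip("/")
    for allowed_host, prefix in ALLOWED_SOURCES:
        # Match the section page itself or anything nested beneath it.
        if host == allowed_host and (path == prefix or path.startswith(prefix + "/")):
            return True
    return False


def off_list_citations(urls: list[str]) -> list[str]:
    """Return the cited URLs that fall outside the designated sources."""
    return [url for url in urls if not is_allowed_source(url)]
```

If `off_list_citations` returns anything for a given answer, that would be a cue to remind the fact checker to stick to its designated sources.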

- Language-specific search: I also instructed the fact checker to go to different websites depending on the language used in the user’s query. So, for queries in English, SGFC primarily searches the websites of CNA, Straits Times and Factually for answers. Chinese queries activate searches on Chinese news websites like Zaobao and 8World, while queries in Malay draw on answers from Berita Harian (SPH) and Berita (Mediacorp). I did not include Tamil news sources for SGFC because GPT-4, powerful as it is, still can’t competently handle the complexities of the Tamil language to the satisfaction of my colleagues.

One reason for insisting on language-specific web search is to try to reduce errors stemming from machine translation as much as possible. Secondly, my intuition is that users who submit fact check queries in Chinese or Malay would feel more confident about answers taken directly from Chinese or Malay news sources.

I haven’t carried out full-fledged tests in Chinese and Malay, so this post will focus only on test results from false claims in English.
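For readers who think in code, the language-specific routing described above amounts to a simple lookup table. The sketch below is only an illustration in Python: in SGFC the routing was expressed as plain-English instructions, and the language codes and the `sources_for` helper are assumptions made for this example.

```python
# Language-to-source routing as described above. The language codes ("en",
# "zh", "ms") and the function name are assumptions made for this sketch.
SOURCES_BY_LANGUAGE = {
    "en": [
        "https://www.channelnewsasia.com/singapore",
        "https://www.straitstimes.com/singapore",
        "https://www.gov.sg/factually",
    ],
    "zh": [
        "https://www.zaobao.com.sg/news/singapore",
        "https://www.8world.com/singapore",
    ],
    "ms": [
        "https://www.beritaharian.sg/setempat",
        "https://berita.mediacorp.sg/singapura",
    ],
}


def sources_for(language_code: str) -> list[str]:
    """Return the preferred sources for a query language, or [] if unsupported."""
    return SOURCES_BY_LANGUAGE.get(language_code.lower(), [])
```

A Tamil query (`sources_for("ta")`) returns an empty list here, mirroring the decision to leave Tamil sources out.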

- Custom instructions: Aside from the detailed instructions for web search, I also gave SGFC a list of general instructions to be truthful, concise, and to be upfront when it does not have a firm answer. It was told specifically to compare long paragraphs of user-submitted text with published versions of stories found on the 7 websites, so as to assess whether certain details had been falsified.

The best part of building this AI fact checker? I didn’t have to write a single line of code. Everything was done in natural language.

The older prototypes I tested had to be built by small data science and software development teams, a luxury most newsrooms don’t have. Requests for changes took weeks, and some teams never got around to fixing the bugs.

For SGFC, it took me mere minutes to build it on ChatGPT Plus, once I settled on the general approach to the problem. I could iterate on the product as often as I wanted, and could observe the impact of the changes immediately.

In my view, Custom GPT is a game changer for newsrooms. For one, it massively reduces the time, technical skills, and manpower needed to build powerful AI apps.

More importantly, Custom GPT puts the power to create tech solutions directly in the hands of those who have genuine insights into the problems faced by newsrooms. This vastly reduces the newsrooms’ reliance on third-parties who have zero knowledge of how journalists and editors operate.

Unfortunately, Custom GPT remains under-utilised in newsrooms, based on conversations I’ve had with senior news leaders in Singapore and elsewhere.

TESTING SG FACT CHECKER

I tested SGFC against 100 fake claims: 25 single-sentence claims, 25 multi-paragraph claims, 25 fully AI-generated articles, and 25 articles with mixed human-written and AI-generated text. Most of the fake claims were based on recent news stories in Singapore. See a sample of the test dataset here (more on this at the end of the article).

I did not test SGFC against real news stories, as this did not prove to be a challenge even for the more primitive prototypes in my earlier tests. While Bing is not my first choice for web search, it is still a world-class search engine. I doubt it would have trouble matching queries involving real news stories against those that it had crawled and indexed extensively.

The mechanics of the test were straightforward. Single sentence queries were pasted directly into the SGFC’s chat window. For the longer queries, I merely added this prompt — “Verify whether this is true or false” — followed by the text of the fake claim.

A handful of queries required a second prompt, simply to say “Try again”, to see if the GPT could be coaxed to give a better answer.
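I ran the test by hand in the chat window, but the procedure is simple enough to write down as a small Python sketch. The `build_query` helper and the blank line between the prompt and the claim are assumptions for illustration. Only the two prompt strings come from the test itself.

```python
# Prompt strings used in the test. Everything else here is illustrative.
VERIFY_PROMPT = "Verify whether this is true or false"
FOLLOW_UP_PROMPT = "Try again"


def build_query(claim: str, single_sentence: bool) -> str:
    """Build the chat message for one fake claim.

    Single-sentence claims go in as they are. Longer claims are prefixed
    with the verification prompt.
    """
    claim = claim.strip()
    if single_sentence:
        return claim
    # Assumption: the prompt and the claim are separated by a blank line.
    return f"{VERIFY_PROMPT}\n\n{claim}"
```

The follow-up prompt would only be sent when the first answer looked like it could be improved.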

Here are the top line test results at a glance:

I rated the relevancy of the links/citations separately, as I consider this a crucial metric for success:

I tried to vary the content of the fake claims as widely as possible, ranging from bogus rumors of Taylor Swift getting married in Singapore, to AI-generated stories about Tesla’s Cyber Trucks being developed for military use here.

SGFC performed best against fake claims based on widely reported news and events, such as Covid-19 outbreaks, elections or major acts of crime in the community, where there’s no shortage of search results to draw on.

The fact checker performed less well against false claims based on more obscure news items, or silly claims that were almost entirely fictional. I suspect the dip in performance is due to the paucity of relevant search results.

Interestingly, I did not notice any LLM hallucination in my test results, which I find remarkable. Even when SGFC failed to confirm or deny a false claim, it did not wrongly suggest that the user’s query could be true. Nonetheless, I considered these “inadequate” fact checks to be failures as they did not really help me make a decision as to whether the claim is true or not.

I rated only one of its answers, out of a hundred, to be wrong or flat-out unacceptable. More on this in the examples below.

Overall, the scores I got from this test are the best I’ve seen from any AI-assisted fact checker to date. But as with any assessment of AI products, the accuracy scores or success rates are only as good or as relevant as your test dataset.

And to be clear, there is a considerable degree of subjectivity to such tests. What I consider to be a successful fact check may not be acceptable for you or others.

So don’t take my word for it. Put together your own dataset — it’s very easy to do as long as you have access to a ChatGPT-like tool — and test it against SGFC for yourself.
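If you do run your own test, you will need some way to tally the ratings. Here is a minimal Python sketch, assuming three outcome labels ("success", "inadequate" and "wrong") and a separate yes/no rating for link relevance. The `Result` class, the label names and the sample rows are all made up for illustration and are not my actual test data.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Result:
    claim_type: str       # e.g. "single sentence", "multi-paragraph"
    outcome: str          # "success", "inadequate" or "wrong"
    relevant_links: bool  # did the answer cite relevant links?


def summarise(results: list[Result]) -> dict:
    """Compute success, failure and link-relevance rates for a set of ratings."""
    total = len(results)
    if total == 0:
        raise ValueError("no results to summarise")
    outcomes = Counter(r.outcome for r in results)
    return {
        "total": total,
        "success_rate": outcomes["success"] / total,
        # Inadequate answers count as failures, alongside wrong ones.
        "failure_rate": (outcomes["inadequate"] + outcomes["wrong"]) / total,
        "relevant_link_rate": sum(r.relevant_links for r in results) / total,
    }


# Made-up rows, purely to show the shape of the data.
sample = [
    Result("single sentence", "success", True),
    Result("multi-paragraph", "success", False),
    Result("fully AI-generated", "inadequate", False),
    Result("mixed", "success", True),
]
summary = summarise(sample)
```

Rating link relevance separately from the outcome matters because an answer can debunk a claim correctly and still fail to cite anything useful.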

SGFC IN ACTION

What impressed me most about SGFC’s performance is the quality of the answers. This is hard to quantify, but having dealt with both AI and human-powered fact checking services in the last two years, I would consider SGFC’s fact checks to be close to human-quality.

They are not perfect and still need to be double-checked. But the detailed answers are delivered in a tiny fraction of the time taken for human checkers to complete the same task, and present a very good first draft to work from.

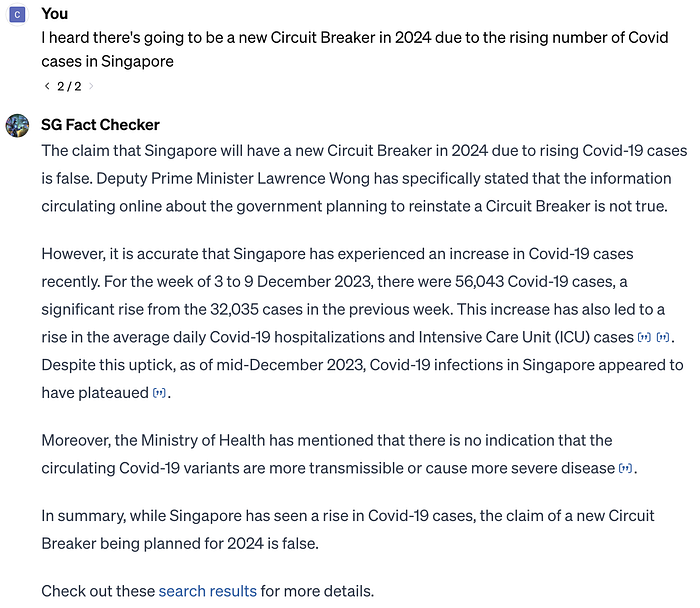

Let’s take a look at some examples, starting with this simple one-sentence false claim that a new Circuit Breaker (ie lockdown) is on the cards:

SGFC Vs Fake Circuit Breaker Claim

The fact checker debunks the claim without any ambiguity, and explains its answer with good background information and the appropriate links. See the archived chat to better access the links cited in SGFC’s answer.

SGFC Vs Fake Apple iPhone 17 Claim

This 3-para fake claim about the iPhone 17 was generated by Bard. I added just a simple prompt to the fake text: “Verify whether this is true or not”.

Again, SGFC easily debunked the claim after searching through the designated sources of ground truth but did not provide any relevant links (though it provided a link to the raw search results). This appears to be an issue for completely fabricated claims such as this one involving the iPhone 17, where there is a paucity of relevant web search results.

The fact checker’s reasoning, that the absence of such news on the trusted sources suggests the claim might not be true, seems problematic at first glance. Surely the absence of evidence is not evidence of absence.

But if you are in the news business, you would know for a fact that a major business development like this involving one of the world’s most valuable companies, if it were true, would not go unreported. So the fact that such a major claim could not be corroborated after checking multiple reliable news sources would indeed be a major red flag. In other words, SGFC’s assumptions and reasoning are not wrong here.

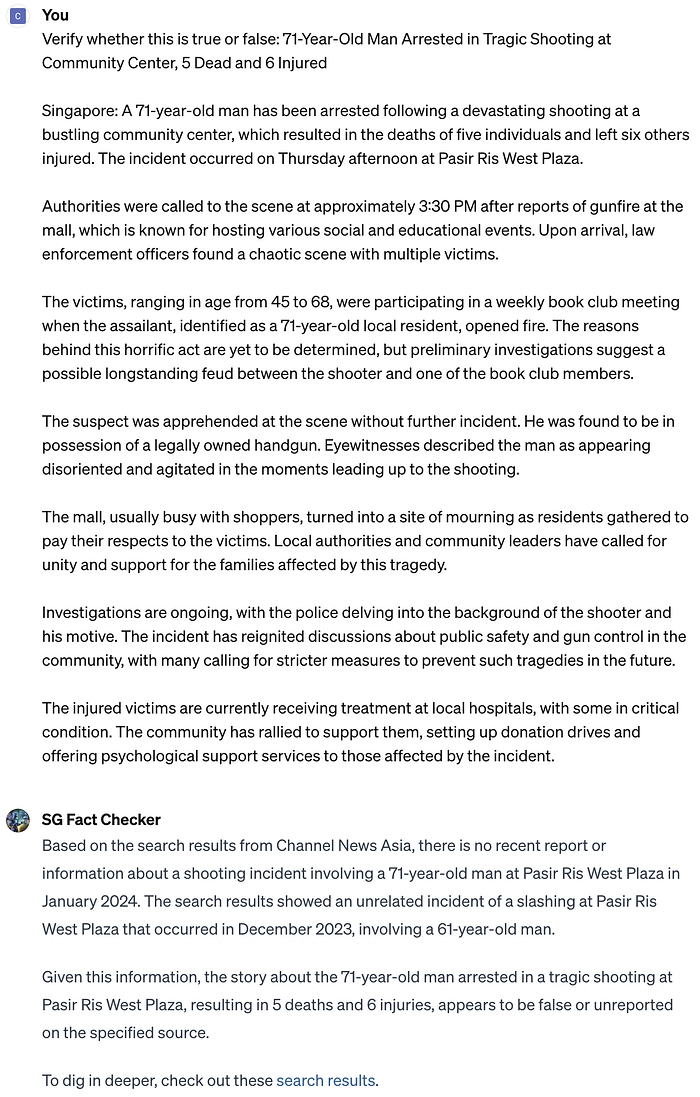

SGFC Vs False Claims Of A Shooting In Pasir Ris

This is a trickier false claim, and was generated by GPT-4 based on a real CNA story about a slashing incident in Pasir Ris West Plaza. GPT-4 was told to write a new variation of the story, but with details changed to a shooting instead. This incident took place in December 2023, well beyond the SGFC model’s training data cut-off date of April 2023.

Similar to the Apple example above, the SGFC debunked the claim but did not provide a direct link to its source(s). But it did accurately point out that Pasir Ris witnessed a slashing incident recently at the same location cited in the false claim. SGFC also provided a link to the raw search results it garnered, which contain the links to the actual story on the slashing.

One notable trait of this fact checker: Once it has debunked the central claim in a user’s query, it tends to ignore the other fabricated details. So in this case of the fake shooting incident, SGFC did not bother debunking the made-up details about the victims.

But the beauty of the LLM chatbot design is that you can keep querying the fact checker until you are satisfied. So if you need every paragraph to be debunked, you can ask the SGFC to keep checking.

In this case, I was satisfied with the answer and moved on to the next query.

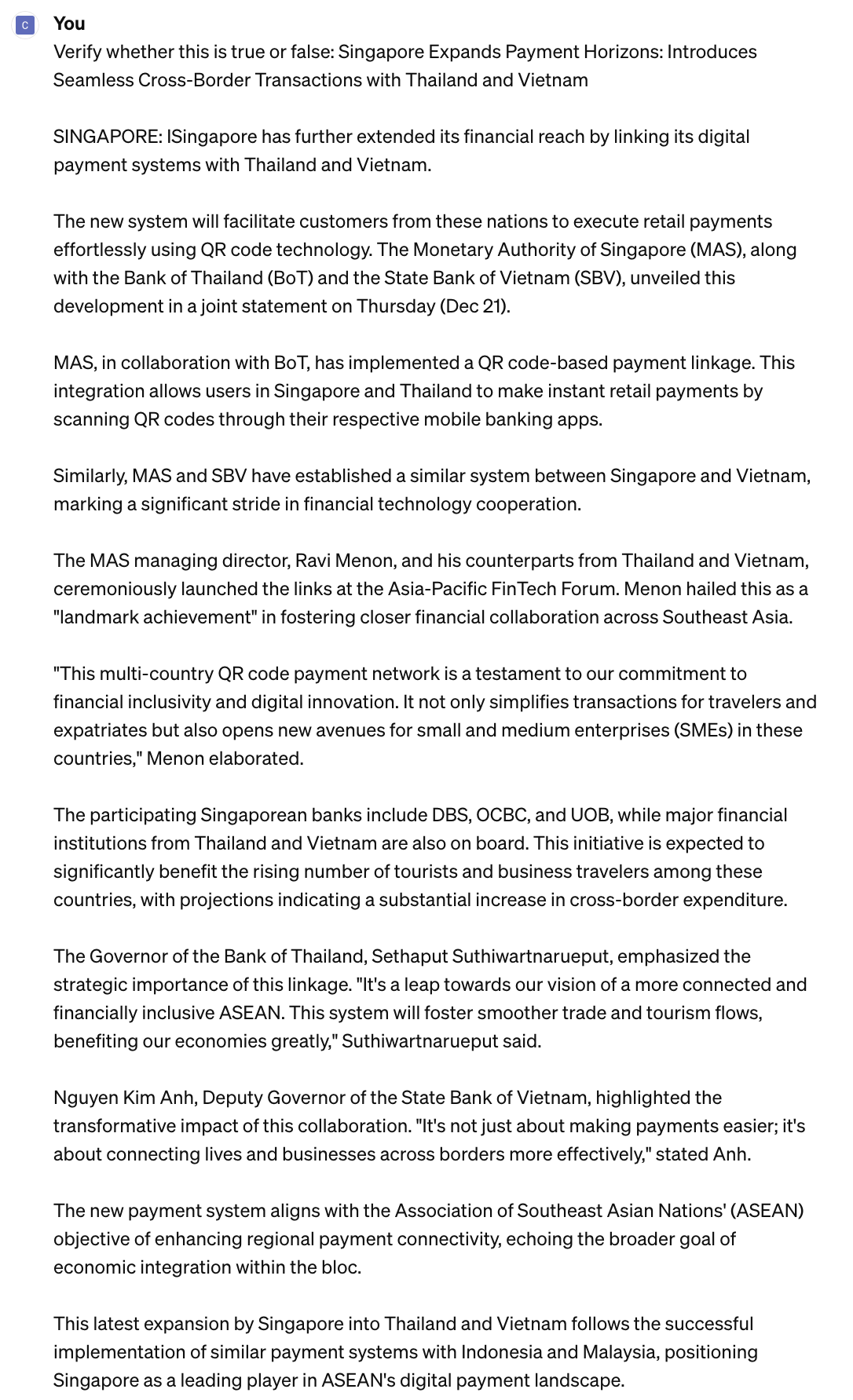

SGFC Vs Fake Claims Of Cross-Border Payments With Thailand and Vietnam

This lengthy example (easier to read it via the archived chat here) is the only one out of 100 test claims where I considered SGFC’s answer to be wrong, and not merely inadequate (ie cannot confirm or deny).

The fake claim is based on a real CNA story about Singapore expanding cross-border payment links with Indonesia and Malaysia. GPT-4 was used to generate an alternative version that swopped out Indonesia and Malaysia for Thailand and Vietnam.

I rejected SGFC’s answer as it failed to identify the original CNA story that the fake one was based on. It went off-topic by citing a bunch of unrelated background information to support its answer that the fake claim is partially true. It also cited information from sources it was not supposed to draw from.

When I asked why it was not drawing its answer from CNA, ST and Factually, SGFC re-did the fact check but still came back with a “partially true” assessment.

More critically, it failed to debunk the details in the fake claim and instead cited a bunch of weakly-related developments to support its answer.

The actual story wasn’t a widely reported or prominent news event, and my hunch is that this tripped up SGFC’s search efforts. A more detailed prompt, such as one asking the fact checker to verify the nature of the new payment system (using QR code), might have worked better. But this test is mostly devoted to discovering SGFC’s “zero-shot” fact checking capabilities, ie, can it get it right on the first try without any assistance from the user.

FINAL THOUGHTS

If you have never tried using an AI-assisted fact checker prior to the introduction of Custom GPT, then you’d likely be less impressed with the performance of SGFC. But if you have tried any of the older prototypes, the GPT-based fact checker’s game-changing leap in performance and capabilities is obvious right away.

To be clear, you’ll still need an editor or journalist-in-the-loop to double check SGFC’s answers. I don’t think anyone should trust a tool like this 100%.

Still, I would go as far as to say that this changes how newsrooms should approach fact checking in the AI era. Instead of building one common tool or platform for fact checking, they should build multiple GPTs that better fit the needs of smaller teams or specific events, such as elections, as the incremental costs involved are practically zero.

Fact checking involves more than just text-based queries, of course. Journalists spend as much time verifying images, videos and, increasingly, deepfakes and AI-generated multimedia content.

There are no obvious or simple ways to create a GPT that can fact check images or videos, as the sources of ground truth for such content are not as readily available or easy to create, compared to text-based websites or datasets. The ability to link GPTs with third-party APIs could, on paper, address some of these challenges. But I have yet to try this out to see if a GPT can indeed be connected to, say, a deepfake video detector.

There are downsides, of course. You can’t access these tools if you are not a ChatGPT Plus subscriber (though it’s a matter of time before other Big Tech companies roll out similar capabilities).

If your sources of ground truth are highly confidential or strictly offline, then this approach won’t work. If you need to fact check in languages like Tamil or Burmese that are considered to be “low resource languages” in machine learning (ie not enough digital data), chances are high that you would get a very sub-standard performance from the GPT-based fact checker.

But for newsrooms operating in English or the major European and East Asian languages, this should open up new and vastly more efficient ways to deal with the projected wave of disinformation and misinformation.

TEST DATASET

It is far from clear whether it is kosher to share a stack of fake claims, even if they are clearly labelled as such and are part of a test like this. So I’m just sharing a sample of the test dataset for now.

The sample entries contain the more innocuous false claims, the source of the false claims, and the responses from SGFC, including the URLs used in the citations. The two columns, “Outcome” and “Comments”, reflect my own subjective assessment of the fact checker’s answer.